Web app + PWA

One concept spanning desktop marking and mobile capture/review

Inclusion Solutions-led concept, built with Sensigence as technology partner

Hosted on Manus for rapid review, sharing, and future custom subdomain use.

This showcase packages the proof of concept, commercial case, and product-direction materials for an AI-assisted exam-marking platform that could begin as a web app and grow into a teacher-friendly PWA.

Offline library

Reference layer to reduce repeat model work and improve marking consistency

Commercially testable

Pricing, affordability, and school-adoption economics already modelled

Commercial intent

A credible POC before heavy build spend

Validate workflow, handwriting handling, moderation, and unit economics before committing to a broader school rollout.

Operating model

Retrieval-first, guardrailed, teacher-controlled

The concept assumes an offline knowledge library, confidence-led routing, and human override at the point of judgement.

Teachers are under pressure to mark quickly, apply mark schemes consistently, and still generate feedback that stands up to moderation. The commercial opportunity is strongest when the product does not promise magic, but instead proves it can reduce repetitive effort, structure professional judgement, and stay affordable enough for whole-school adoption.

The concept focuses on high-friction tasks such as script ingestion, mark-scheme comparison, candidate answer review, moderation flags, and feedback drafting. The aim is not to replace teachers, but to compress the repetitive part of the marking workflow.

The strategy pack grounds the concept in real paper, mark-scheme, examiner-report, and exemplar-answer materials so the product direction stays aligned to how marks are awarded in practice rather than how a generic model guesses they might be.

The commercial case assumes tight API guardrails, hard usage caps, and an offline knowledge library that reduces unnecessary model calls. That creates room for a lower price point and a thin-but-scalable profit slice across many schools.
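
As a rough illustration of how an offline library and a hard usage cap might work together, the sketch below checks a local store before spending any model budget. Every name in it (`OfflineLibrary`, `GuardedMarker`, `call_model`, `max_model_calls`) is hypothetical; the concept materials describe the principle, not an implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional


class UsageCapExceeded(Exception):
    """Raised when the model-call allowance has been used up."""


@dataclass
class OfflineLibrary:
    """Hypothetical local store of previously resolved marking patterns."""
    entries: Dict[str, str] = field(default_factory=dict)

    def lookup(self, key: str) -> Optional[str]:
        return self.entries.get(key)

    def store(self, key: str, value: str) -> None:
        self.entries[key] = value


@dataclass
class GuardedMarker:
    library: OfflineLibrary
    call_model: Callable[[str], str]  # stand-in for a paid model API call
    max_model_calls: int              # hard cap (assumed to be set per school)
    model_calls_used: int = 0

    def assess(self, key: str) -> str:
        # 1. Retrieval first: reuse a stored result if one exists.
        cached = self.library.lookup(key)
        if cached is not None:
            return cached
        # 2. Guardrail: refuse rather than silently overspend.
        if self.model_calls_used >= self.max_model_calls:
            raise UsageCapExceeded(
                f"cap of {self.max_model_calls} model calls reached"
            )
        # 3. Only now spend a model call, and keep the result for next time.
        self.model_calls_used += 1
        result = self.call_model(key)
        self.library.store(key, result)
        return result


marker = GuardedMarker(
    OfflineLibrary(),
    call_model=lambda key: f"draft feedback for {key}",
    max_model_calls=1,
)
marker.assess("paper-1/q3")  # spends the single allowed model call
marker.assess("paper-1/q3")  # served from the library, no model call
```

The point of the design is that repeat questions cost nothing after the first pass, which is what would create the room for a lower price point.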

These are concept visuals rather than final interface screens, but they are intentionally close enough to product reality to help the client, partner, and school stakeholders discuss workflow, confidence, portability, and brand direction.

The desktop concept prioritises multi-panel review, moderation control, extracted handwriting verification, and confident mark-scheme comparison for teachers and departmental leads working through batches of scripts.

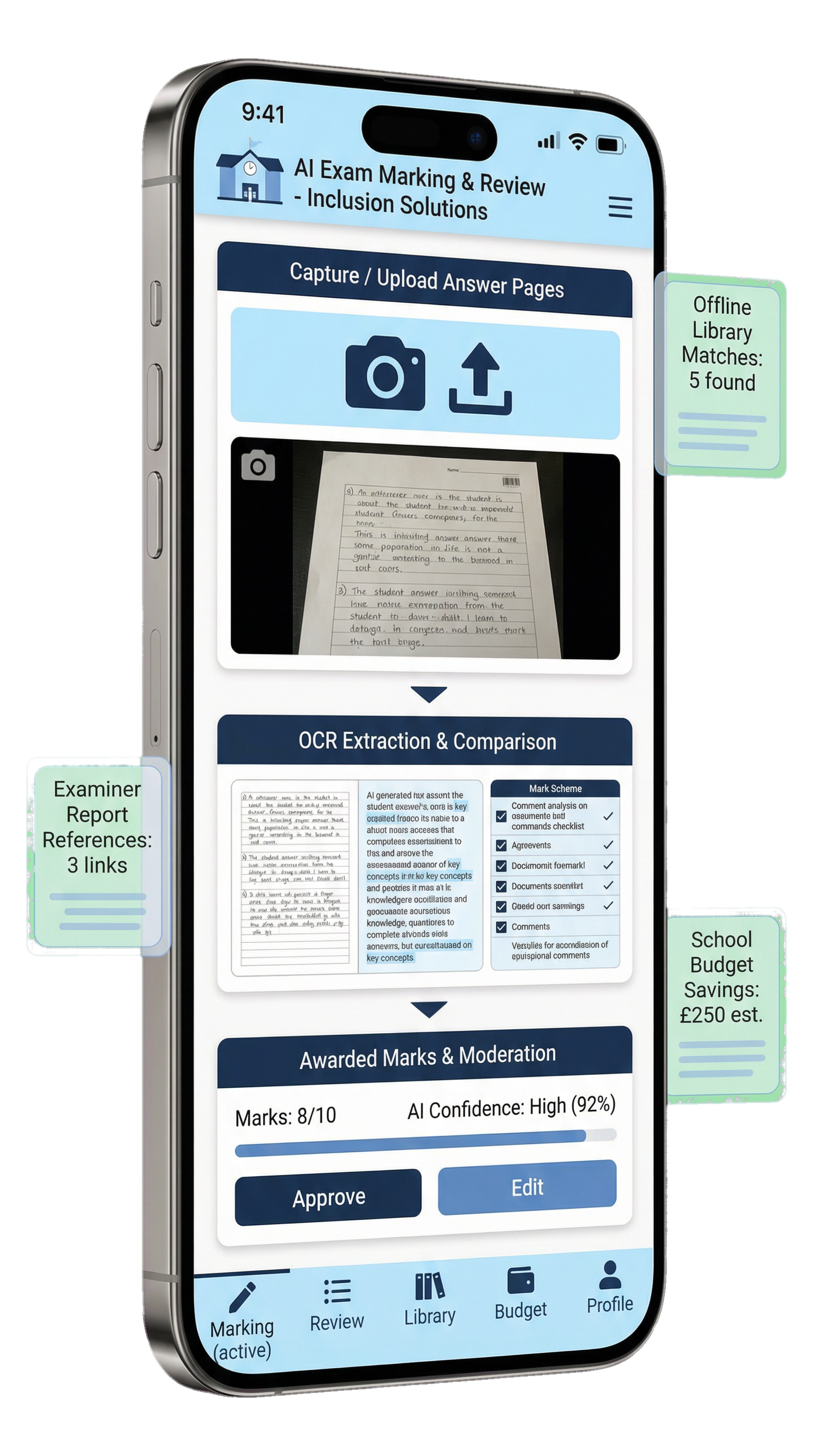

The mobile concept is not a cut-down novelty. It is framed as a practical companion for capturing handwritten answers, checking AI interpretation, approving marks, and reviewing flagged responses with a permanently visible mobile navigation pattern.

The product proposition is easier to trust when the user journey is simple and the technical sophistication stays mostly behind the scenes.

Teachers upload a paper, mark scheme, and candidate responses, or select known past-paper sources from a structured library.

The system first retrieves relevant mark schemes, examiner commentary, exemplars, and calibrated prior patterns before deciding how much model reasoning is really needed.

Low-risk structured questions can be processed with tighter templates, while longer and less certain answers are escalated with confidence scoring and moderation prompts.

Teachers can approve, edit, or flag marks, with clear evidence trails and a path to moderation rather than a black-box score.
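
The routing step in this journey could be sketched as follows. This is a minimal illustration only: the question-type labels, the confidence score, and both thresholds are assumptions made for the example, not values taken from the concept materials.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    TEMPLATE = "template"              # tight template check for structured questions
    MODEL = "model"                    # fuller model reasoning plus a moderation prompt
    TEACHER_REVIEW = "teacher_review"  # too uncertain to score automatically


@dataclass
class Response:
    question_type: str  # "structured" or "extended" (assumed labels)
    confidence: float   # 0.0 to 1.0, e.g. from handwriting extraction (assumed)


def route(response: Response, high: float = 0.85, low: float = 0.50) -> Route:
    """Pick a processing path from question type and confidence.

    The thresholds are hypothetical and would need calibrating in a POC.
    """
    if response.confidence < low:
        return Route.TEACHER_REVIEW
    if response.question_type == "structured" and response.confidence >= high:
        return Route.TEMPLATE
    return Route.MODEL


print(route(Response("structured", 0.95)))  # Route.TEMPLATE
print(route(Response("extended", 0.95)))    # Route.MODEL
print(route(Response("extended", 0.30)))    # Route.TEACHER_REVIEW
```

Whichever route is taken, the result would still be a proposed mark that the teacher can approve, edit, or flag.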

For this client conversation, the key point is not whether the business starts with a large headcount. It is whether a lean, well-controlled service could save enough teacher time to justify a modest annual school subscription, then scale only once demand is proven. The revised model therefore assumes a small phase-one team, retrieval-first processing, and clear usage guardrails rather than an expensive corporate footprint.

£240k

Lean annual operating base for a small phase-one business rather than a heavy scaled-company setup

~£1k

Illustrative annual spend per school on the core tier at roughly 5,000 scripts a year

~300

Approximate number of active core-tier schools needed before the lean model breaks even

The commercial case is easier to trust when it reads as saved time, consistent moderation support, and affordable annual spend rather than startup theatre or aggressive growth language.

The revised model assumes a small operating footprint first, then adds people only when enough schools are active to justify the extra support and delivery cost.

Trust-level or department-level buying matters because onboarding, support, and training can then be spread across more scripts without raising the price sharply per school.

Lean cost base

This is intentionally framed as a small operating business. It is enough to build, support, and govern the proposition without presenting the client with an alarming corporate overhead story.

| Cost area | Annual assumption | Why it is there |
|---|---|---|
| Product and technical lead | £95k | Owns product direction, platform decisions, and delivery control. |
| Delivery engineer | £70k | Builds the workflow, integrations, and teacher-facing improvements. |
| Education, compliance, and QA support | £30k | Fractional specialist input rather than a full large back-office team. |
| Software, admin, insurance, and lean selling overhead | £45k | Covers the operating basics without assuming a heavy go-to-market machine. |
| Illustrative total fixed annual cost | £240k | A lean phase-one setup designed to test commercial viability before larger staffing commitments. |

Teacher time-saved calculator

Adjust the assumptions below to estimate how much marking time could be taken out of the week if the system helps with first-pass review, rubric recall, and moderation support.

Hours saved each week

8.0

Based on the weekly script volume and the time saved per script.

Teacher days regained each year

34.3

Shown as 7-hour working days to keep it easy to picture.

Annual hours returned

240

Useful for discussing value with departments, heads of faculty, or trust buyers.

Marking evenings recovered

120

Shown as 2-hour evening sessions to make the benefit easier to visualise.
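
The arithmetic behind these four figures can be sketched as below. The 7-hour day and 2-hour evening come from the captions above, and 120 scripts a week matches the weekly example used elsewhere on this page. The 4 minutes saved per script and the 30 working weeks are assumptions, chosen only because they reproduce the displayed 8.0 hours and 240 hours; the page does not state the calculator's actual inputs.

```python
def time_saved(scripts_per_week: float,
               minutes_saved_per_script: float,
               working_weeks: float) -> dict:
    """Estimate marking time returned to one teacher.

    Days use 7-hour working days and evenings use 2-hour sessions,
    as in the captions on the page.
    """
    weekly_hours = scripts_per_week * minutes_saved_per_script / 60
    annual_hours = weekly_hours * working_weeks
    return {
        "hours_per_week": round(weekly_hours, 1),
        "annual_hours": round(annual_hours),
        "working_days": round(annual_hours / 7, 1),
        "evenings": round(annual_hours / 2),
    }


# Assumed inputs: 120 scripts a week, 4 minutes saved on each, 30 weeks a year.
print(time_saved(120, 4, 30))
# {'hours_per_week': 8.0, 'annual_hours': 240, 'working_days': 34.3, 'evenings': 120}
```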

Teacher-friendly viability view

The table below is intended to make the commercial story easier to read than a finance workbook. It shows the kind of annual spend a school might face and the rough scale needed for the service to stand on its own feet.

| Offer level | Indicative annual spend per school | Approximate break-even scale | How to read it |
|---|---|---|---|
| Budget | ~£750 | ~437 schools | Possible later, but tight for a first commercial offer. |
| Core | ~£1,000 | ~300 schools | The clearest day-one story for affordability and viability. |
| Premium | ~£1,250 | ~229 schools | Works if extra moderation insight and workflow value are visible. |
| Benchmark reference | ~£3,000 | ~86 schools | Shows there is room below existing reference pricing if the build stays disciplined. |
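
The break-even column can be reproduced with a short calculation. Dividing the £240k fixed cost by a £1,000 subscription alone would give 240 schools, so the ~300 shown for the core tier implies some per-school running cost. The sketch below assumes roughly £200 per school per year, a figure inferred from the table rather than stated on the page; the workbook may break costs down differently.

```python
import math

FIXED_ANNUAL_COST = 240_000             # £, from the lean cost base
ASSUMED_VARIABLE_COST_PER_SCHOOL = 200  # £ per year; inferred, not stated


def break_even_schools(price_per_school: float,
                       fixed_cost: float = FIXED_ANNUAL_COST,
                       variable_cost: float = ASSUMED_VARIABLE_COST_PER_SCHOOL) -> int:
    """Smallest number of schools whose contribution covers the fixed cost."""
    contribution = price_per_school - variable_cost
    if contribution <= 0:
        raise ValueError("price must exceed the per-school variable cost")
    return math.ceil(fixed_cost / contribution)


tiers = {"Budget": 750, "Core": 1_000, "Premium": 1_250, "Benchmark reference": 3_000}
for name, price in tiers.items():
    print(f"{name}: ~{break_even_schools(price)} schools")
# Budget: ~437 schools
# Core: ~300 schools
# Premium: ~229 schools
# Benchmark reference: ~86 schools
```

At roughly 5,000 scripts a year per school, the assumed £200 would equate to about 4p of running cost per script, which is the number a POC would need to test.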

If deeper scenario modelling is needed, ask Craig for the editable XLSX workbook.

What a week in school could look like

This is a plain-English example rather than a promise. It is designed to help a teacher picture how the tool might fit into a real marking week without removing professional judgement.

At a glance

~120 scripts

A typical weekly batch for a busy teacher or shared department flow.

~8 hours back

Illustrative weekly time returned when first-pass review and moderation support remove repetitive effort.

Teacher signs off

The system narrows the pile, but the teacher still reviews exceptions, edits marks, and owns the judgement.

Monday

Upload the batch and match the mark scheme

The teacher or department lead uploads the paper, mark scheme, and answer scripts. The platform organises the batch and pulls the right reference material together first.

Tuesday

Let the system handle the repetitive first pass

Straightforward responses can be checked against the expected answer pattern, while more complex answers are flagged for closer review rather than treated as certain.

Wednesday to Thursday

Review the exceptions instead of every single script

The teacher spends time where it matters most: ambiguous handwriting, borderline marks, unusual answers, and moderation-sensitive cases.

Friday

Approve, adjust, and keep an evidence trail

The teacher finalises marks, checks feedback wording, and retains a clearer record of why decisions were made for moderation or internal review.

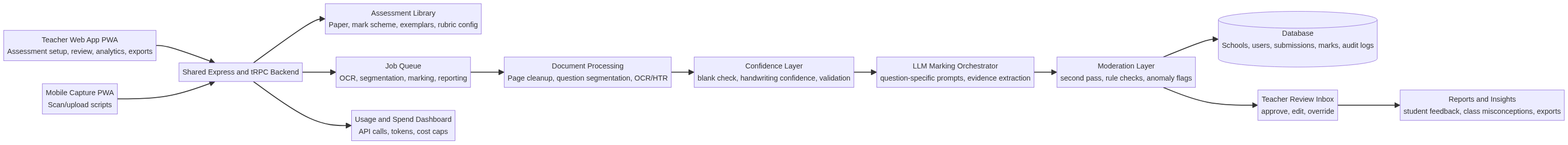

Architecture snapshot

The architecture note now opens as a dedicated landscape PDF so the workflow can be reviewed more comfortably in one wide view.

Explore the downloadable architecture pack

The materials assembled so far already support a credible board-level conversation. The strategy pack defines the product opportunity, the architecture note shows how an offline knowledge library can lower cost and improve consistency, and the financial model demonstrates that affordability and margin can coexist if the service is engineered carefully from the start.

A POC can validate handwriting handling, moderation confidence, and routing logic before heavy build cost is committed.

A school-facing proposition becomes stronger when backed by working economics rather than AI theatre.

If the unit economics hold, the model becomes suitable for volume sales with recurring institutional value.

This is designed as a decision pack rather than a loose document set. The page brings together product direction, architecture, affordability thinking, and modelling outputs so the client can assess whether to commission the next phase confidently.

The main strategy document covering feasibility, architecture direction, feature scope, handwriting handling, and product framing.

A shorter summary for non-technical review, suitable for early stakeholder circulation.

The first-pass proof-of-concept architecture showing how ingestion, retrieval, LLM routing, and review fit together.

Scenario-based thinking on cohort size, LLM cost, affordability, and first-pass contribution margins.

A branded PDF summary of the model for stakeholder review, now framed around a leaner phase-one cost base. The editable XLSX workbook can be requested separately from Craig if deeper scenario testing is needed.

The logical next step is to turn this into a lightweight working prototype that proves ingestion, handwriting review, confidence-led routing, moderation workflow, and price discipline against a narrow subject scope.